We’ve spent decades working out what good code looks like. Don’t repeat yourself. Keep functions small. Reduce cyclomatic complexity. Low coupling and high cohesion. These rules were derived from hard-earned experience with what makes code maintainable by humans.

Then we handed the keyboard to an AI, and some of those rules became unnecessary.

The rules were always for us

The DRY (Don’t Repeat Yourself) principle is a heuristic for human memory. When a developer writes the same logic twice, they’ll eventually change one copy and forget the other. Bugs compound. The rule exists because humans lose track.

An AI, in principle, never has to lose track. If there are ten duplicate blocks, it could search for them and change all ten in one pass. The cognitive overhead that made duplication dangerous no longer applies in the same way.

This doesn’t mean duplication is now good, especially while we are still learning how to work together with AI. It just means we’re still measuring code by the rules we are used to.

Another example is how added friction affects the developer experience. In Rust, that friction is known as “fighting the borrow checker”. From the documentation:

> However, this system does have a certain cost: learning curve. Many new users to Rust experience something we like to call ‘fighting with the borrow checker’, where the Rust compiler refuses to compile a program that the author thinks is valid. This often happens because the programmer’s mental model of how ownership should work doesn’t match the actual rules that Rust implements. You probably will experience similar things at first. There is good news, however: more experienced Rust developers report that once they work with the rules of the ownership system for a period of time, they fight the borrow checker less and less.

An AI writing Rust doesn’t mind. It can invest the extra inference cycles, try and fail, and iterate until the compiler is satisfied. What was a cognitive load on human attention is now just a slower generation step.

The new scarcity is correctness

If AI can handle compiler friction, and duplication is less of a liability, what actually matters? Correctness.

Not in the colloquial sense of “it runs without crashing”. Correctness in the formal sense: the program provably does what it claims to do, under all conditions, with no edge cases left unverified.
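To make the distinction concrete, here is a minimal sketch in Lean 4, assuming a recent toolchain where the `omega` tactic is available. The function `double` and the theorem name are hypothetical, chosen only for illustration. A test would check a handful of inputs; the theorem states the property for every natural number, and the file does not compile unless the proof goes through.

```lean
-- Hypothetical example function: doubles a natural number.
def double (n : Nat) : Nat := n + n

-- The claim holds for all n, not just the inputs a test happens to try.
theorem double_eq_two_mul (n : Nat) : double n = 2 * n := by
  unfold double
  omega
```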

We are facing a new language design challenge. Some researchers, like Victor Taelin, are already thinking about what a programming language built for AI agents would look like: strong typing, strict memory models, and formal verification built into the language itself. Not as optional tools, but as first-class constructs.

The insight is counterintuitive: features that made languages harder for humans are exactly the features that make AI-written code trustworthy. An AI doesn’t need ergonomic syntax or gentle learning curves. It needs a language that makes correctness checkable.

The human’s job has changed, and our tools haven’t (yet)

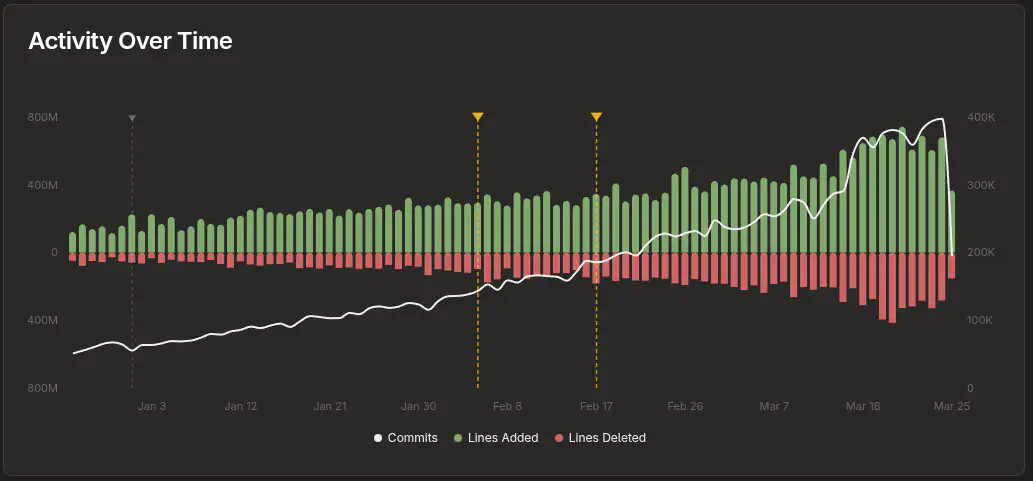

I have already written about how AI is changing the abstraction level at which programmers operate. AI is writing a growing share of code. For instance, 600M lines of code are added (and signed) by Claude every day. And this does not count other models or commits that are not tagged.

That volume takes its toll on software architecture. Not because AI is bad at writing code: it isn’t. But because the AI optimizes for the immediate task, within the scope of the user’s prompt. It doesn’t spontaneously ask “should I extract this into a shared utility?” or notice that “this function is starting to do three things”. Left unguided, it will duplicate logic across files, inflate cyclomatic complexity in long functions, and produce correct-but-tangled code that becomes expensive to change later.

The human directing the AI is still responsible for the architecture. But they’re doing it without instruments. We need new tooling and IDEs focused on code changes, architectural decisions and risks. This will help the human (and the AI) write better code. Some ideas to put this into practice:

- Duplication detection. Find the same logic in multiple places. Surface collisions to the user, or direct the AI to rework the functionality.

- Complexity signals. Flag functions with rising cyclomatic complexity, and propose a plan for restructuring them.

- Diff analysis. Analyze code diffs to direct the user’s attention to high-risk areas.
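As a rough illustration of the duplication-detection idea, here is a minimal Python sketch built on the standard `ast` module. It assumes the code under review is Python, and it only catches functions whose arguments and bodies are structurally identical: formatting and comments are ignored, but renamed variables are not. The function name `find_duplicate_functions` and the file names in the example are hypothetical.

```python
import ast
from collections import defaultdict


def find_duplicate_functions(sources: dict[str, str]) -> list[list[str]]:
    """Group functions whose arguments and bodies have an identical AST.

    `sources` maps a (hypothetical) file name to its source text.
    """
    groups: dict[str, list[str]] = defaultdict(list)
    for filename, text in sources.items():
        for node in ast.walk(ast.parse(text)):
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                # Fingerprint the arguments and body, but not the function name.
                # ast.dump omits line numbers by default, so layout is ignored.
                fingerprint = ast.dump(node.args) + "".join(
                    ast.dump(stmt) for stmt in node.body
                )
                groups[fingerprint].append(f"{filename}:{node.name}")
    # Only fingerprints shared by more than one function are duplicates.
    return [sorted(names) for names in groups.values() if len(names) > 1]


sources = {
    "billing.py": "def total(items):\n    return sum(i.price for i in items)\n",
    "cart.py": "def cart_sum(items):\n    # same logic\n    return sum(i.price for i in items)\n",
    "util.py": "def count(items):\n    return len(items)\n",
}
print(find_duplicate_functions(sources))
# [['billing.py:total', 'cart.py:cart_sum']]
```

A real tool would likely need fuzzier matching (renamed variables, reordered statements), but even an exact-match report like this gives the human something to point the AI at.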

None of this requires the AI to be smarter. It requires us to instrument the process.
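Instrumentation of this kind can start small. As one example, the complexity signal from the list above could be sketched in Python with the standard `ast` module. This is a simplified approximation of cyclomatic complexity (one plus the number of branch points), not a full implementation, and the names `cyclomatic_complexity`, `complexity_by_function`, and `rising_complexity` are hypothetical. It compares two versions of a file and reports functions whose score went up.

```python
import ast

# Node types counted as one extra decision point each (a simplification).
_BRANCH_NODES = (ast.If, ast.For, ast.AsyncFor, ast.While,
                 ast.ExceptHandler, ast.IfExp, ast.comprehension)


def cyclomatic_complexity(func: ast.AST) -> int:
    """Approximate complexity: 1 + branch points, including nested code."""
    score = 1
    for node in ast.walk(func):
        if isinstance(node, _BRANCH_NODES):
            score += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two extra paths.
            score += len(node.values) - 1
    return score


def complexity_by_function(source: str) -> dict[str, int]:
    """Map each function name in `source` to its approximate complexity."""
    scores = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            scores[node.name] = cyclomatic_complexity(node)
    return scores


def rising_complexity(before: str, after: str) -> dict[str, tuple[int, int]]:
    """Report functions present in both versions whose score increased."""
    old, new = complexity_by_function(before), complexity_by_function(after)
    return {
        name: (old[name], score)
        for name, score in new.items()
        if name in old and score > old[name]
    }


before = "def ship(order):\n    if order.paid:\n        return 'send'\n    return 'hold'\n"
after = (
    "def ship(order):\n"
    "    if order.paid and order.in_stock:\n"
    "        for item in order.items:\n"
    "            if item.fragile:\n"
    "                return 'pack'\n"
    "        return 'send'\n"
    "    return 'hold'\n"
)
print(rising_complexity(before, after))
# {'ship': (2, 5)}
```

Run on every AI-generated change, a report like this could feed either the human reviewer or a follow-up prompt asking the AI to restructure the flagged function.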

Two levers, one problem

Writing code will be completely transformed, and we need to adapt our way of working. At the language level, there’s an opportunity to design new languages tailored to AI agents. At the tooling level, the opportunity is to create the “harness” that keeps humans in control of what AI agents build, directing scarce human attention to the right places.

Coding rules didn’t disappear. They translated. And the translation is still in progress.